TRENDING IN INFORMATION TECHNOLOGY

What is information technology about?

What are some of the latest trends in information technology?

The world is more techno-centric than ever. The rapidly expanding information sector has opened a wide gap between where the world is heading and the approaches businesses use to run their operations. The challenges for businesses are considerable, especially because the IT industry is undergoing a tectonic shift: communication, delivery platforms and collaboration channels are all changing at the same time. Innovations in information technology are short-lived because they are quickly superseded. Nothing lasts forever.

Information technology (IT) is the application of computers and telecommunications equipment to store, retrieve, transmit and manipulate data, often in the context of a business or other enterprise. The term is commonly used as a synonym for computers and computer networks, but it also encompasses other information distribution technologies such as television and telephones. Several industries are associated with information technology, including computer hardware, software, electronics, semiconductors, internet, telecom equipment, e-commerce and computer services.

Humans have been storing, retrieving, manipulating and communicating information since the Sumerians in Mesopotamia developed writing around 3000 BC, but the term information technology in its modern sense first appeared in a 1958 article. The term covers three categories: techniques for processing, the application of statistical and mathematical methods to decision-making, and the simulation of higher-order thinking through computer programs.

Devices have been used to aid computation for thousands of years, probably initially in the form of a tally stick. The Antikythera mechanism is considered to be the earliest known mechanical analog computer, and the earliest known geared mechanism. Comparable geared devices did not emerge in Europe until the 16th century, and it was not until 1645 that the first mechanical calculator capable of performing the four basic arithmetical operations was developed.

Electronic computers, using either relays or valves, began to appear in the early 1940s. The electromechanical Zuse Z3, completed in 1941, was the world’s first programmable computer, and by modern standards one of the first machines that could be considered a complete computing machine. Colossus, developed during the Second World War to decrypt German messages, was the first electronic digital computer. Although it was programmable, it was not general-purpose, being designed to perform only a single task. It also lacked the ability to store its program in memory; programming was carried out using plugs and switches to alter the internal wiring.

The development of transistors in the late 1940s at Bell Laboratories allowed a new generation of computers to be designed with greatly reduced power consumption. The first commercially available stored-program computer, the Ferranti Mark I, contained 4050 valves and had a power consumption of 25 kilowatts. By comparison, the first transistorized computer, developed at the University of Manchester and operational by November 1953, consumed only 150 watts in its final version.

Early electronic computers such as Colossus made use of punched tape, a long strip of paper on which data was represented by a series of holes, a technology now obsolete. Electronic data storage, which is used in modern computers, dates from the Second World War, when a form of delay line memory was developed to remove the clutter from radar signals; its first practical application was the mercury delay line. The first random-access digital storage device was the Williams tube, based on a standard cathode ray tube. The information stored in it and in delay line memory was volatile: it had to be continuously refreshed, and was lost once power was removed.

IBM introduced the first hard disk drive in 1956, as a component of their 305 RAMAC computer system. Most digital data today is still stored magnetically on hard disks, or optically on media such as CD-ROMs. Until 2002 most information was stored on analog devices, but that year digital storage capacity exceeded analog for the first time. As of 2007 almost 94% of the data stored worldwide was held digitally: 52% on hard disks, 28% on optical devices and 11% on digital magnetic tape. It has been estimated that the worldwide capacity to store information on electronic devices grew from less than 3 exabytes in 1986 to 295 exabytes in 2007, doubling roughly every 3 years.
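
The "doubling roughly every 3 years" figure can be checked with a little arithmetic. The sketch below assumes steady exponential growth between the two quoted data points and uses 3 exabytes as the 1986 starting value; since the source says "less than 3", the result is best read as an upper bound. The function name `doubling_time` is purely illustrative.

```python
import math


def doubling_time(start, end, years):
    """Years needed for a quantity to double, assuming steady exponential growth."""
    return years * math.log(2) / math.log(end / start)


# Figures quoted above: under 3 exabytes in 1986, 295 exabytes in 2007.
years_elapsed = 2007 - 1986
estimate = doubling_time(3, 295, years_elapsed)
print(f"Doubling time: about {estimate:.1f} years")
```

A smaller starting value would shorten the estimate, which is consistent with the "roughly every 3 years" claim.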

Database management systems emerged in the 1960s to address the problem of storing and retrieving large amounts of data accurately and quickly. One of the earliest such systems was IBM’s Information Management System (IMS), which stores data hierarchically and is still widely deployed more than 40 years later. The first commercially available relational database management system (RDBMS) was released by Oracle in 1980.

All database management systems consist of a number of components that together allow the data they store to be accessed simultaneously by many users while maintaining its integrity. A characteristic of all databases is that the structure of the data they contain is defined and stored separately from the data itself, in a database schema.
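
To make the separation between schema and data concrete, here is a minimal sketch using SQLite through Python's standard `sqlite3` module. The `employee` table and its rows are invented for illustration; the point is only that the structure is recorded in a catalog (`sqlite_master` in SQLite) apart from the rows, and that the schema's constraints protect integrity.

```python
import sqlite3

# An in-memory database, so the example leaves no files behind.
conn = sqlite3.connect(":memory:")

# The schema: structure and constraints, defined before any data exists.
conn.execute(
    "CREATE TABLE employee (id INTEGER PRIMARY KEY, name TEXT NOT NULL, dept TEXT)"
)

# The data: rows that must conform to the schema.
conn.executemany(
    "INSERT INTO employee (name, dept) VALUES (?, ?)",
    [("Ada", "Engineering"), ("Grace", "Research")],
)
conn.commit()

# SQLite keeps the schema in its own catalog table, apart from the rows.
schema_sql = conn.execute(
    "SELECT sql FROM sqlite_master WHERE type = 'table' AND name = 'employee'"
).fetchone()[0]
rows = conn.execute("SELECT id, name, dept FROM employee ORDER BY id").fetchall()

# A row that violates the NOT NULL constraint is rejected.
try:
    conn.execute("INSERT INTO employee (name, dept) VALUES (NULL, 'Sales')")
    rejected = False
except sqlite3.IntegrityError:
    rejected = True

print(schema_sql)
print(rows)
print("Invalid row rejected:", rejected)
```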

The terms “data” and “information” are not synonymous. Anything stored is data, but it only becomes information when it is organized and presented meaningfully. Most of the world’s digital data is unstructured, and stored in a variety of different physical formats even within a single organization. Data warehouses began to be developed in the 1980s to integrate these disparate stores. They typically contain data extracted from various sources, including external sources such as the Internet, organized in such a way as to facilitate decision support systems (DSS).

Data transmission has three aspects: transmission, propagation, and reception. It can be broadly categorized as broadcasting, in which information is transmitted uni-directionally downstream, or telecommunications, with bidirectional upstream and downstream channels.

XML has been increasingly employed as a means of data interchange since the early 2000s, particularly for machine-oriented interactions in web-oriented protocols such as SOAP, describing “data-in-transit rather than data-at-rest”. One of the challenges of such usage is converting data from relational databases into XML Document Object Model (DOM) structures.
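
The relational-to-DOM conversion mentioned above can be sketched with Python's standard library alone. This is one simple mapping (one element per row, one child element per column), not a standard or the only possible design; the `product` table, the tag names and the helper `rows_to_dom` are all hypothetical.

```python
import sqlite3
from xml.dom.minidom import Document

# A small relational table to convert (illustrative data only).
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE product (id INTEGER PRIMARY KEY, name TEXT, price REAL)")
conn.executemany(
    "INSERT INTO product (name, price) VALUES (?, ?)",
    [("keyboard", 49.5), ("monitor", 199.0)],
)


def rows_to_dom(cursor, root_tag, row_tag):
    """Build a DOM document with one element per row and one child per column."""
    doc = Document()
    root = doc.createElement(root_tag)
    doc.appendChild(root)
    columns = [col[0] for col in cursor.description]
    for row in cursor:
        row_el = doc.createElement(row_tag)
        for col, value in zip(columns, row):
            field = doc.createElement(col)
            field.appendChild(doc.createTextNode(str(value)))
            row_el.appendChild(field)
        root.appendChild(row_el)
    return doc


cursor = conn.execute("SELECT id, name, price FROM product ORDER BY id")
doc = rows_to_dom(cursor, "products", "product")
xml_text = doc.toxml()
print(doc.toprettyxml(indent="  "))
```

Part of the difficulty the text alludes to is visible even here: column types are flattened to strings, and a real mapping would also need a policy for NULL values and for relationships between tables.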

Massive amounts of data are stored worldwide every day, but unless it can be analyzed and presented effectively it essentially resides in what have been called data tombs: “data archives that are seldom visited”. To address that issue, the field of data mining – “the process of discovering interesting patterns and knowledge from large amounts of data” – emerged in the late 1980s.

In an academic context, the Association for Computing Machinery defines IT as “undergraduate degree programs that prepare students to meet the computer technology needs of business, government, healthcare, schools, and other kinds of organizations. IT specialists assume responsibility for selecting hardware and software products appropriate for an organization, integrating those products with organizational needs and infrastructure, and installing, customizing, and maintaining those applications for the organization’s computer users.”

In a business context, information technology has been defined as “the study, design, development, application, implementation, support or management of computer-based information systems”. The responsibilities of those working in the field include network administration, software development and installation, and the planning and management of an organization’s technology life cycle, by which hardware and software are maintained, upgraded and replaced. The business value of information technology lies in the automation of business processes, provision of information for decision making, connecting businesses with their customers, and the provision of productivity tools to increase efficiency.

So what are the latest trends in information technology?

Next generation mobile devices and mobile apps – These are the smartphones and tablets. The different varieties of smart mobile devices run mobile operating systems such as iOS, Android, Symbian OS, webOS, Windows Phone and BlackBerry OS/QNX. Their usage is increasing globally and they are quickly replacing traditional handsets. Sales of next generation mobile devices are steadily catching up with PC sales, and within a few years the two are expected to be level. Mobile applications are increasingly being used in marketing strategies such as mobile affiliate marketing.

Social media – The world is increasingly using social networking sites to stay in touch and communicate. The focus of enterprise marketing has now shifted to the use of social media for the promotion of products and services. Organizations are becoming social enterprises. Social media has given businesses a platform for reaching a global audience directly, and businesses are adopting social media marketing because it is more affordable than traditional marketing strategies. Social networking is quickly shaping the direction of society and business.

Cloud computing – It is certainly one of the most sophisticated of the latest trends in information technology. Cloud computing provides services such as software, computation, data access and storage without the end user needing to know the physical location or configuration of the system that provides the service. It is especially effective in cutting businesses’ running costs for data storage and other operations. Data centers are now being downsized to make way for cloud storage. Cloud computing also has built-in scalability and elasticity, which can efficiently support the growth of businesses.

Consumerization of Information Technology – Technological innovation is increasingly driven by the consumer world. More mobile applications are being built for mobile users in their own right rather than as replacements for computer applications. The days of monolithic suites are slowly fading away as they are overtaken by applications meant specifically for tablets and smartphones.

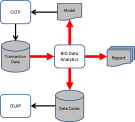

Big data/analytics and patterns – Companies continue to drown in unstructured data that they hardly ever access, and approaches based on the software development life cycle (SDLC) are being adopted to build the systems that manage it. SDLC models include waterfall and the Agile Development Methodology (ADM). Features of ADM include continuous integration, pair programming, spike solutions and refactoring. Waterfall is the more traditional model but is fast being replaced by Agile. Other effective data management technologies include inline deduplication, flash or solid-state drives and automated tiering of data.
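
One of the data management techniques named above stores repeated content only once as it is written, an approach usually called inline deduplication. The sketch below illustrates the idea with fixed-size chunks keyed by their SHA-256 digest. The class `DedupStore`, the tiny chunk size and the file names are hypothetical, and real storage systems differ in many respects (variable-size chunking, persistence, reference counting).

```python
import hashlib


class DedupStore:
    """Toy in-memory store that keeps each distinct chunk only once."""

    def __init__(self, chunk_size=4096):
        self.chunk_size = chunk_size
        self.chunks = {}  # digest -> chunk bytes, stored once
        self.files = {}   # file name -> ordered list of chunk digests

    def write(self, name, data):
        digests = []
        for start in range(0, len(data), self.chunk_size):
            chunk = data[start:start + self.chunk_size]
            digest = hashlib.sha256(chunk).hexdigest()
            # Deduplicate at write time: keep the chunk only if it is new.
            self.chunks.setdefault(digest, chunk)
            digests.append(digest)
        self.files[name] = digests

    def read(self, name):
        # Reassemble the file from its chunk references.
        return b"".join(self.chunks[d] for d in self.files[name])

    def stored_bytes(self):
        return sum(len(chunk) for chunk in self.chunks.values())


store = DedupStore(chunk_size=4)  # tiny chunks to keep the demo readable
store.write("a.txt", b"AAAABBBBAAAA")
store.write("b.txt", b"BBBBCCCC")

logical_bytes = len(b"AAAABBBBAAAA") + len(b"BBBBCCCC")
print("Logical bytes written:", logical_bytes)
print("Physical bytes stored:", store.stored_bytes())
```

Here 20 logical bytes are held in 12 physical bytes, because the repeated `AAAA` and `BBBB` chunks are each stored once.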

Resource management – Servers are being virtualized, which helps businesses reduce the effort of workload management. Data centers are moving towards smaller sizes but with greater density for data storage, i.e. the creation of so-called infinite data centers. Virtualization makes it easier to scale data centers vertically. It optimizes server performance, freeing floor space and saving energy.

New programming platforms – These include Java and .NET. Some of the features and benefits of .NET include a fast turnaround time, a simpler AJAX implementation, and a single framework that handles a variety of operations, so there is no need for multiple frameworks from different vendors to perform different functions. It is also well funded, which allows new features to be released quickly. Features integrated into the platform include LINQ, AJAX, the Unit Testing Framework, Performance Profiler and Client Side Reporting, among others. Java is quite similar to .NET in features and benefits.

Fabrics – This is the vertical integration of server, network and storage systems, together with components that have element-level management software. It lays a foundation for optimizing shared data resources effectively and dynamically. Vendors incorporating this approach include Cisco and HP, which use it to unify network control.

Kathy Kiefer